
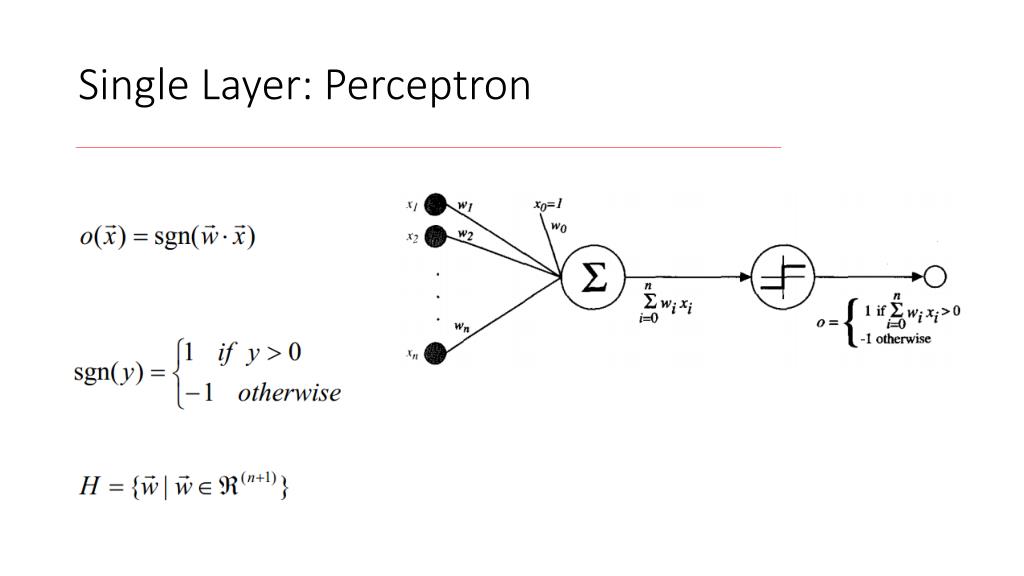

Perceptron is another rather simple linear classifier that forms the foundational unit of the so-called artificial neural networks, which we'll look at later in this course. Now that we know what the weight vector w is supposed to do (define a hyperplane that separates the data), let's look at how we can get such a w. The Perceptron algorithm finds a separating hyperplane by minimizing the distance of the misclassified points in the training set T to the decision boundary (i.e., the hyperplane). The algorithm starts with all the weights initialised to 0, i.e. w = 0. Perceptron can take both real and boolean values as inputs for the classification. More modern variants of the algorithm also introduce non-linearity with kernel functions, so strictly speaking they would not belong to pure linear methods anymore. Shifting bounds for on-line classification algorithms ensure good performance on any sequence of examples that is well predicted by a sequence of changing classifiers. Applying to the Perceptron algorithm the simplest possible eviction policy, which discards a random support vector each time a new one comes in, already achieves such a shifting bound.

The problem of multiple existing solutions is not uniquely related to the optimization algorithm of choice; many times it is actually related to the problem itself. More precisely, it is a direct consequence of the Rouché–Capelli theorem. A simple or multilayer perceptron is nothing but a system of linear equations, in which the input set represents the coefficient matrix, the input set plus the targets represents the augmented matrix, and the perceptron weights are the free parameters to solve for. As the theorem states, if the two matrices have the same rank, there is at least one solution, and if that rank is less than the number of unknowns, there are infinitely many solutions.

Let's consider a very simple function, the XOR function shown in the image above. We can see that both the coefficient matrix (input 1 + input 2 columns) and the augmented matrix (input 1 + input 2 + output columns) have rank 3, since row 1 and row 3 are not independent (i.e. I can rewrite one as a multiple of the other). Moreover, the number of unknowns is 4, greater than the rank, which leaves 4 − 3 = 1 free parameter. Hence there are infinitely many solutions: once I find a set of weights that works for row 1, I can write infinitely many other sets of weights, multiples of the solution found, that will also work as parameters for row 3. Note that the theorem talks only about the coefficients, i.e. the known variables, so this result and test hold regardless of the learned weights, and therefore regardless of the optimization algorithm of choice.
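The perceptron update described above can be sketched in a few lines of plain Python. This is a minimal sketch, assuming labels in {-1, +1} and a bias b kept separate from w; the names `train_perceptron` and `predict` are hypothetical, not taken from any library. Each mistake moves w by y·x, which amounts to a gradient step (learning rate 1) on the criterion −y·(w·x + b) summed over the misclassified points, a quantity proportional to their distance from the hyperplane. On the linearly separable AND function the loop stops after a clean pass; on XOR, which no single hyperplane separates, it keeps making mistakes until `max_epochs` runs out.

```python
def train_perceptron(samples, labels, max_epochs=100):
    """Rosenblatt-style perceptron (sketch). Labels must be -1 or +1.

    Returns (weights, bias, converged)."""
    w = [0.0] * len(samples[0])  # all weights initialised to 0, i.e. w = 0
    b = 0.0                      # bias kept separate from w (an assumption)
    for _ in range(max_epochs):
        mistakes = 0
        for x, y in zip(samples, labels):
            activation = sum(wi * xi for wi, xi in zip(w, x)) + b
            if y * activation <= 0:  # misclassified or exactly on the hyperplane
                # gradient step with learning rate 1 on -y * (w.x + b)
                w = [wi + y * xi for wi, xi in zip(w, x)]
                b += y
                mistakes += 1
        if mistakes == 0:  # a full pass with no errors: w separates the data
            return w, b, True
    return w, b, False  # gave up after max_epochs


def predict(w, b, x):
    """Return the side (+1 or -1) of the hyperplane w.x + b = 0 that x falls on."""
    return 1 if sum(wi * xi for wi, xi in zip(w, x)) + b > 0 else -1


INPUTS = [(0, 0), (0, 1), (1, 0), (1, 1)]
AND_LABELS = [-1, -1, -1, 1]
XOR_LABELS = [-1, 1, 1, -1]

w, b, converged = train_perceptron(INPUTS, AND_LABELS)
print("AND converged:", converged, [predict(w, b, x) for x in INPUTS])
# AND converged: True [-1, -1, -1, 1]

w, b, converged = train_perceptron(INPUTS, XOR_LABELS)
print("XOR converged:", converged)
# XOR converged: False
```

Starting from w = 0 makes the run deterministic for a fixed sample order; shuffling the samples each epoch is a common variation but would change the exact weights found.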
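The Rouché–Capelli rank test discussed above is easy to check mechanically. The sketch below uses hypothetical helper names (`matrix_rank`, `rouche_capelli`) and only the standard library, computing ranks by Gauss-Jordan elimination with exact fractions so no floating-point tolerance is needed. The matrix is a made-up 4×4 system, not the XOR table from the image: it only mirrors the situation described in the post, with row 3 a multiple of row 1 (targets included), rank 3 and 4 unknowns. In general the solutions then form one particular solution plus any multiple of a null-space direction, which the loop at the end checks for a few values of t.

```python
from fractions import Fraction


def matrix_rank(rows):
    """Rank via Gauss-Jordan elimination with exact rational arithmetic."""
    m = [[Fraction(v) for v in row] for row in rows]
    rank = 0
    for col in range(len(m[0]) if m else 0):
        # look for a non-zero pivot in this column, below the rows already used
        pivot = next((r for r in range(rank, len(m)) if m[r][col] != 0), None)
        if pivot is None:
            continue
        m[rank], m[pivot] = m[pivot], m[rank]
        # clear this column in every other row
        for r in range(len(m)):
            if r != rank and m[r][col] != 0:
                factor = m[r][col] / m[rank][col]
                m[r] = [a - factor * p for a, p in zip(m[r], m[rank])]
        rank += 1
    return rank


def rouche_capelli(A, b):
    """Classify the system A w = b by comparing rank(A) and rank([A | b])."""
    rank_a = matrix_rank(A)
    rank_ab = matrix_rank([list(row) + [bi] for row, bi in zip(A, b)])
    if rank_a != rank_ab:
        return "no solution", rank_a, rank_ab
    if rank_a < len(A[0]):  # fewer independent equations than unknowns
        return "infinitely many solutions", rank_a, rank_ab
    return "unique solution", rank_a, rank_ab


# Hypothetical system with 4 unknowns; row 3 is 2 x row 1, targets included.
A = [[1, 0, 1, 0],
     [0, 1, 0, 1],
     [2, 0, 2, 0],
     [1, 1, 0, 0]]
b = [1, 1, 2, 0]
print(rouche_capelli(A, b))             # ('infinitely many solutions', 3, 3)
print(rouche_capelli(A, [1, 1, 3, 0]))  # ('no solution', 3, 4)

# 4 - 3 = 1 free parameter: w_particular + t * null_direction solves A w = b for any t.
w_particular = [0, 0, 1, 1]      # one solution, found by hand
null_direction = [1, -1, -1, 1]  # A times this vector is the zero vector
for t in (0, 1, -2, Fraction(1, 3)):
    w = [p + t * d for p, d in zip(w_particular, null_direction)]
    assert [sum(a * x for a, x in zip(row, w)) for row in A] == b
```

Changing the third target from 2 to 3 breaks the proportionality between rows 1 and 3 only in the augmented matrix, so its rank rises to 4 while the coefficient matrix stays at rank 3, and the system has no solution.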
